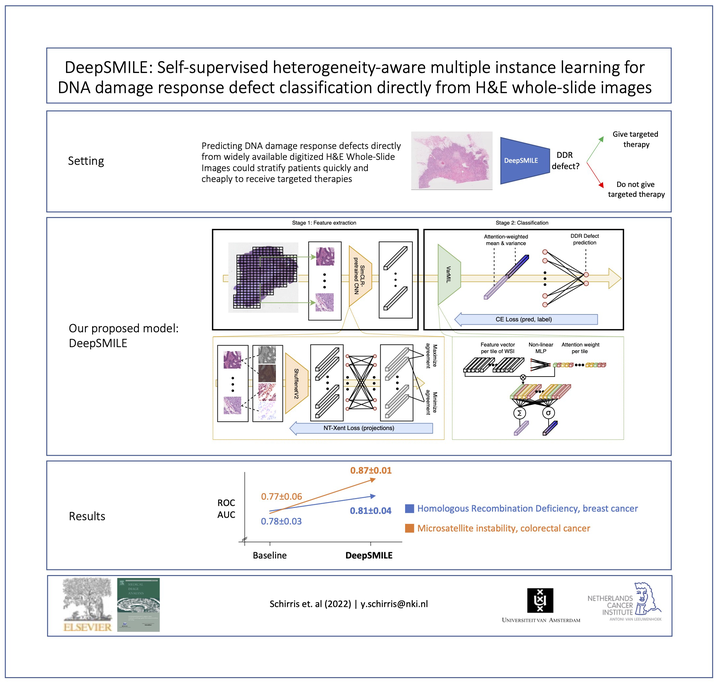

DeepSMILE: Contrastive self-supervised pre-training benefits MSI and HRD classification directly from H&E whole-slide images in colorectal and breast cancer

Abstract

We propose DeepSMILE, a Deep learning-based weak-label method that combines Self-supervised pre-training with heterogeneity-aware deep Multiple Instance LEarning to analyze whole-slide images (WSIs) of hematoxylin and eosin (H&E) stained tumor tissue without requiring pixel-level or tile-level annotations. We apply DeepSMILE to the prediction of homologous recombination deficiency (HRD) and microsatellite instability (MSI). We use contrastive self-supervised learning to pre-train a feature extractor on histopathology tiles of cancer tissue. We then use variability-aware deep multiple instance learning to learn the function that aggregates tile features while modeling tumor heterogeneity. For MSI prediction in a tumor-annotated and color-normalized subset of TCGA-CRC (n=360 patients), contrastive self-supervised learning improves the tile supervision baseline from 0.77 to 0.87 AUROC, on par with our proposed DeepSMILE method. On TCGA-BC (n=1041 patients), without any manual annotations, DeepSMILE improves HRD classification performance from 0.77 to 0.81 AUROC compared to tile supervision with either a self-supervised or an ImageNet pre-trained feature extractor. Our proposed methods reach the baseline performance using only 40% of the labeled data on both datasets. These improvements suggest that standard self-supervised learning techniques, combined with multiple instance learning, can improve genomic label classification in the histopathology domain with less labeled data.
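
To make the idea of variability-aware tile aggregation concrete, the following is a minimal sketch and not the implementation evaluated in this work. It assumes that a slide is summarized by the attention-weighted mean and the attention-weighted variance of its tile features, concatenated into one vector; the abstract does not specify the exact pooling formula, so this form is an assumption. The names `attention_weights` and `variability_aware_pool` are hypothetical, and the random scores stand in for the output of a learned attention network.

```python
import math
import random


def attention_weights(scores):
    """Softmax over per-tile attention scores (one score per tile)."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def variability_aware_pool(tiles, scores):
    """Concatenate the attention-weighted mean and variance of tile features.

    tiles:  list of feature vectors, one per tile (all the same length)
    scores: list of attention scores, one per tile (assumed to come from a
            learned scoring network; random values are used below)
    """
    a = attention_weights(scores)
    d = len(tiles[0])
    mean = [sum(a_k * t[j] for a_k, t in zip(a, tiles)) for j in range(d)]
    var = [
        sum(a_k * (t[j] - mean[j]) ** 2 for a_k, t in zip(a, tiles))
        for j in range(d)
    ]
    return mean + var  # slide-level embedding of length 2 * d


# Toy usage: 50 tiles with 8-dimensional features from a (hypothetical)
# pre-trained feature extractor.
random.seed(0)
tiles = [[random.gauss(0, 1) for _ in range(8)] for _ in range(50)]
scores = [random.gauss(0, 1) for _ in range(50)]
slide_embedding = variability_aware_pool(tiles, scores)
```

In this sketch, the variance half of the embedding is what carries information about heterogeneity: a slide whose tiles all look alike yields near-zero variance, whereas a slide with mixed tile phenotypes does not. A slide-level classifier would then be trained on `slide_embedding` using only the slide-level genomic label.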